Simultaneous Learning from Human Pose and Object Cues for Real-Time Activity Recognition (ICRA 2020)


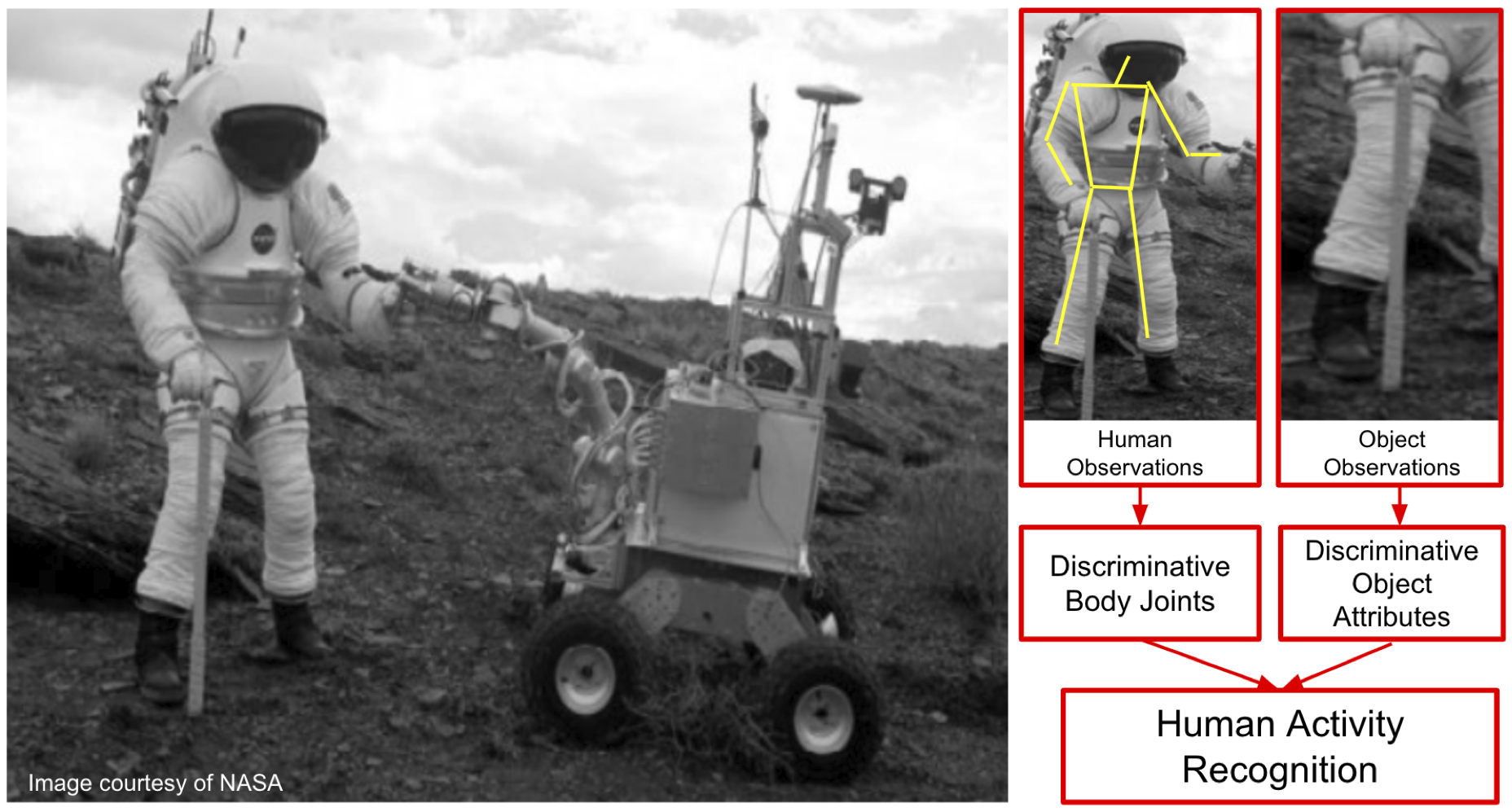

Real-time human activity recognition plays an essential role in real-world human-centered robotics applications, such as assisted living and human-robot collaboration. Although previous methods that encode human poses from skeletal data have shown promising results on real-time activity recognition, they cannot take into account the context provided by objects in the scene and in use by the humans, which can further discriminate between human activity categories. In this paper, we propose a novel approach to real-time human activity recognition that simultaneously learns from observations of both human poses and the objects involved in the activity. We formulate human activity recognition as a joint optimization problem under a unified mathematical framework, which uses a regression-like loss function to integrate human pose and object cues and defines structured sparsity-inducing norms to identify discriminative body joints and object attributes. To evaluate our method, we perform extensive experiments on two benchmark datasets and on a physical robot in a home assistance setting. Experimental results show that our method outperforms previous methods and achieves real-time performance for human activity recognition, with a processing speed of 10^4 Hz.
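To make the idea of a regression-like loss combined with a structured sparsity-inducing norm more concrete, here is a minimal sketch. It is not the paper's formulation or solver, which this page does not spell out. It assumes a generic objective, `||XW - Y||_F^2 + lam * ||W||_{2,1}`, where `X` concatenates hypothetical pose and object features, `Y` holds one-hot activity labels, and the row-wise L2,1 norm pushes entire feature rows of `W` toward zero. The function names, the synthetic data, and the iteratively reweighted least-squares solver are all assumptions chosen for illustration.

```python
import numpy as np


def l21_norm(W):
    """Sum of the L2 norms of the rows of W (the L2,1 norm)."""
    return float(np.sum(np.linalg.norm(W, axis=1)))


def fit_l21_regression(X, Y, lam=1.0, n_iter=50, eps=1e-8):
    """Approximately minimize ||XW - Y||_F^2 + lam * ||W||_{2,1}.

    Uses iteratively reweighted least squares: each step solves a
    ridge-like system whose diagonal weights grow for rows of W with
    small norm, which drives uninformative feature rows toward zero.
    """
    d = X.shape[1]
    XtX = X.T @ X
    XtY = X.T @ Y
    D = np.eye(d)  # initial reweighting matrix (assumption: identity)
    for _ in range(n_iter):
        W = np.linalg.solve(XtX + lam * D, XtY)
        row_norms = np.linalg.norm(W, axis=1)
        D = np.diag(1.0 / (2.0 * row_norms + eps))
    return W


def predict(X, W):
    """Assign each sample to the class with the largest regression score."""
    return np.argmax(X @ W, axis=1)


# Synthetic stand-in data: columns 0-5 play the role of pose features and
# columns 6-9 the role of object features. Only columns 0 and 6 carry signal.
rng = np.random.default_rng(0)
X = rng.normal(size=(400, 10))
labels = (X[:, 0] + X[:, 6] > 0).astype(int)
Y = np.eye(2)[labels]  # one-hot activity labels

W = fit_l21_regression(X, Y, lam=5.0)
row_norms = np.linalg.norm(W, axis=1)
print("row norms of W:", np.round(row_norms, 3))
print("training accuracy:", np.mean(predict(X, W) == labels))
```

In this toy setup, the rows of `W` with the largest norms indicate which features the model treats as discriminative, which mirrors, in a very simplified way, how a sparsity-inducing norm can highlight informative body joints and object attributes. Prediction reduces to one matrix product and an argmax, which is one reason regression-style models of this kind can run at high frame rates.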

Citation: Simultaneous Learning from Human Pose and Object Cues for Real-Time Activity Recognition. Brian Reily, Qingzhao Zhu, Christopher Reardon, and Hao Zhang. International Conference on Robotics and Automation (ICRA), 2020.